From hidden experiments to confident Gen AI adoption

In many organizations, Gen AI adoption has already started, just not in a way leadership can clearly see, steer, or scale.

Employees experiment quietly with AI tools to summarize documents, draft emails, analyze data, or speed up daily work. These initiatives are rarely malicious or careless. On the contrary, they are often driven by curiosity, pressure to deliver faster, or genuine innovation. Yet taken together, these hidden experiments form a pattern that deserves attention: Shadow AI.

Shadow AI is not rebellion; it is a signal

Shadow AI is often framed as a risk to control. In practice, it is something else entirely: a signal that people are moving faster than the organization’s structures allow.

When official tools, guidance, or support lag behind expectations, employees fill the gap themselves. They test prompts, compare tools, and develop personal workarounds. This is a natural response to a rapidly evolving technology. The real issue is not that these experiments exist. The issue is that they remain individual, fragmented, and invisible.

When Shadow AI becomes a problem at scale

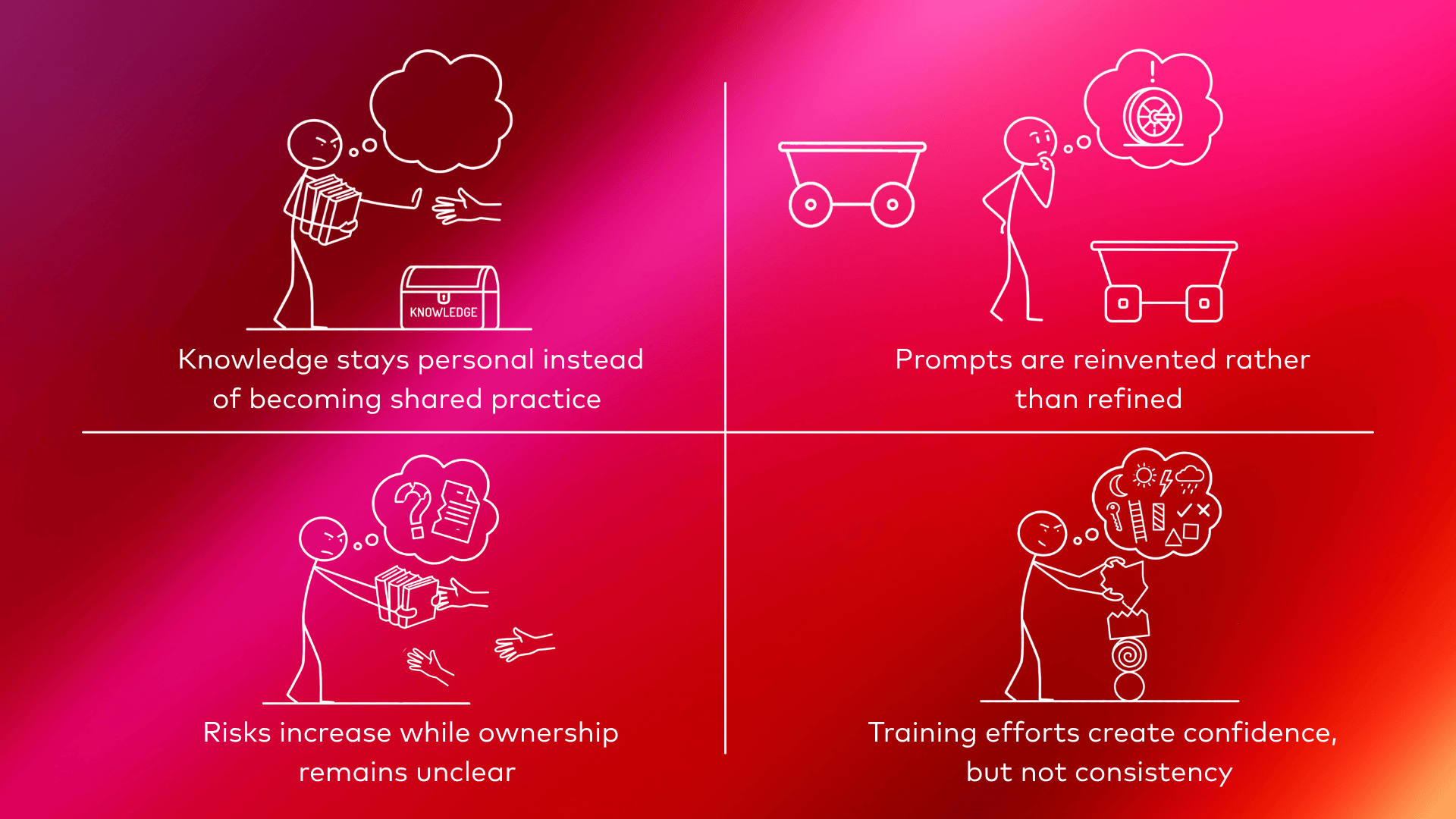

As long as Gen AI use stays small and informal, organizations tend to tolerate it. But the moment AI becomes part of daily workflows, Shadow AI starts to create friction.

What looks like widespread adoption can quickly turn into a false sense of maturity. Organizations believe they are “doing Gen AI” because people attended a training session or gained access to a tool, while in reality usage remains uncoordinated and hard to build upon. At that point, Shadow AI is no longer just experimentation. It becomes a barrier to confident adoption.

The usual responses, and why they fall short

When organizations recognize this situation, their first reactions are predictable:

- Roll out additional tools

- Organize more training sessions

- Introduce stricter governance guidelines

Each of these has value, but none of them solves the underlying issue on its own. Tools without guidance don’t create alignment. Training without follow-up fades quickly. Governance without enablement leads to avoidance. What’s missing is continuous, practical guidance close to the work itself.

From control to confidence

Confident Gen AI adoption does not mean eliminating experimentation. It means making experimentation visible, shared, and purposeful.

That requires an organization to actively connect:

- individual use cases with collective learning

- experimentation with structure

- governance with day-to-day support

This is where many organizations reach a realization: adopting Gen AI is not a one-off change. It is an ongoing capability that needs ownership.

Enter the AI Coach

An AI Coach is someone who operates close to teams and workflows. Someone who understands both the technology and the business context. Someone who helps turn scattered experiments into reusable practices.

In practice, this means:

- Supporting employees with real, practical AI questions as they arise

- Helping identify recurring use cases worth scaling

- Translating experimentation into structured prompt libraries and patterns

- Guiding responsible use without slowing innovation

- Bridging the gap between governance intentions and daily reality

The AI Coach does not replace training or tooling. It makes both more effective.

Moving from hidden to intentional

Shadow AI is often the first sign that Gen AI matters to people’s work. But leaving it unmanaged is a choice. Organizations that want to move beyond isolated experiments need to invest not only in platforms, but in capability.

At Datashift, we increasingly see this pattern across organizations: Gen AI succeeds when there is someone embedded who helps teams learn together, align practices, and adopt with confidence. That is why we support organizations by providing AI Coaches who turn early experimentation into sustainable impact.

The question is no longer whether Gen AI is being used. The question is whether it is being adopted deliberately. From hidden experiments to confident adoption: the difference lies in guidance, not in tools.
