The OpenClaw Symptom: Why Your Enterprise AI Strategy Is Failing (and What to Do About It)

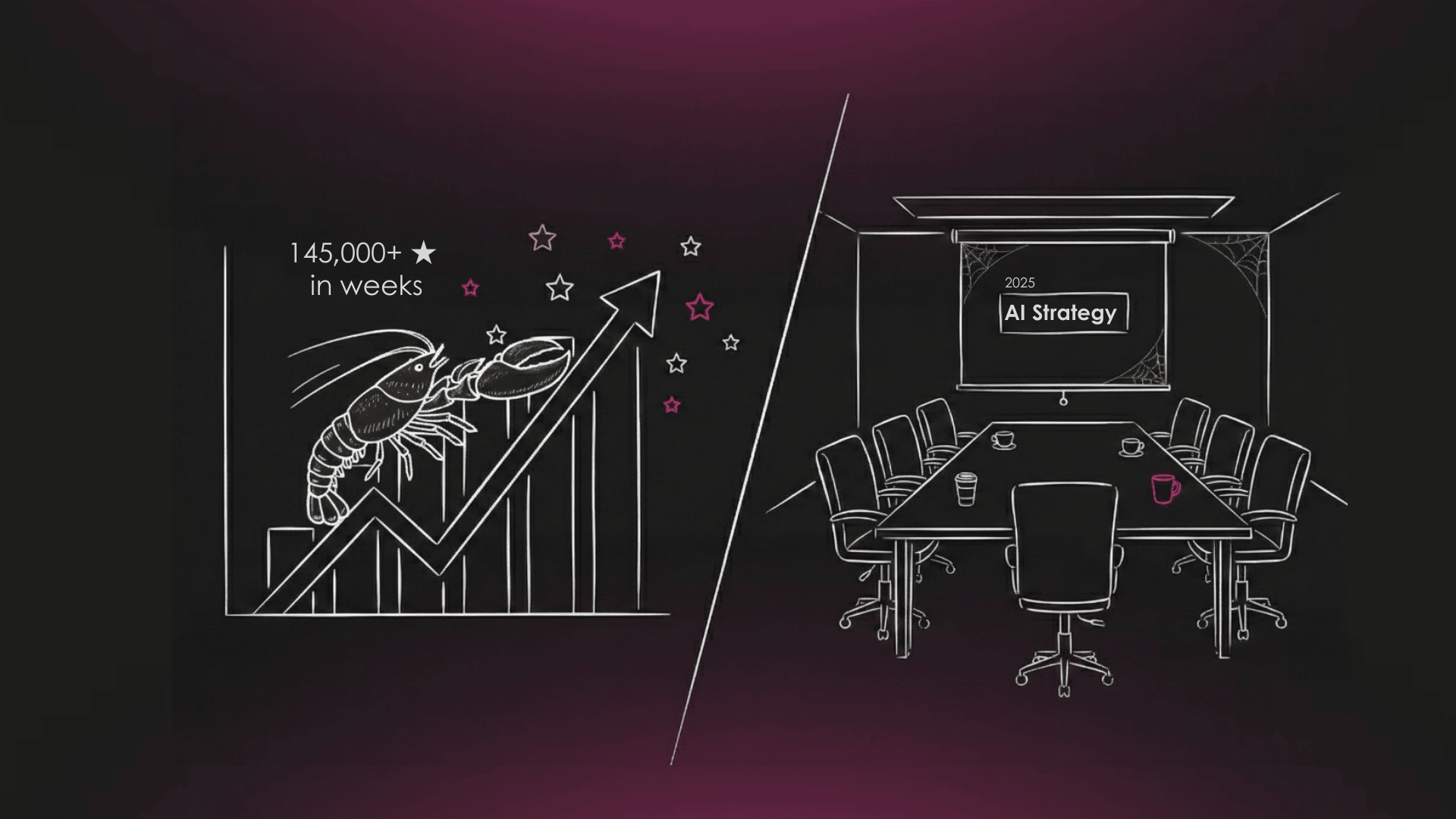

Last month, an open-source AI agent called OpenClaw accumulated 145,000 GitHub stars in weeks. It can book restaurants, manage calendars, and commit code without being asked twice. Researchers have also demonstrated full system compromise via a single malicious email, in under five minutes.

We're not suggesting you install it. But the speed at which 145,000 developers rushed toward something that dangerous is worth taking seriously, because it tells you something about the state of enterprise AI that your quarterly review probably doesn't.

OpenClaw delivers what Siri, Alexa, and enterprise Copilots have promised for a decade but never shipped: an assistant that actually assists. When one user found no availability on OpenTable, the agent downloaded voice synthesis software, called the restaurant, and booked the table. No follow-up prompt. No human intervention. Just a result.

That capability exists. It's available today. And your employees already know it.

The problem isn't that your organization is behind on AI. It's that three compounding structural debts have built up quietly while you were focused on deployment counts and cost-per-seat metrics. Each debt makes the next one harder to close. Together, they explain why most enterprise AI programs are generating activity but not impact.

1. The Expectations Debt

Most leadership teams believe they understand how their employees feel about AI. The data consistently suggests otherwise. BCG and Columbia Business School found that while 76% of executives believe their staff is enthusiastic about AI, only 31% of individual contributors are. That 45-point gap isn't skepticism. It's disappointment.

Employees already know what "good" looks like. They use consumer tools at home. When they see an AI agent autonomously recover from a failed task or generate production-quality code in minutes, their internal benchmark shifts. Then they sit down at their enterprise tooling and feel the gap.

Microsoft's Work Trend Index found that 75% of knowledge workers already use AI at work, and that 78% of those users bring their own tools rather than wait for official options. The enterprise tools aren't losing a feature comparison. They're losing a trust comparison.

What to do about it: The Expectations Debt compounds when it stays invisible. The first move is to make the gap measurable. Benchmark your official AI tooling against the consumer equivalent on two or three real tasks your teams actually perform. Don't bury the results in an IT procurement review. Bring them to leadership with a direct question: is this the gap we're comfortable with? Making the gap visible changes the conversation from "why aren't people adopting our tools" to "what specifically are our tools failing to do."

2. The Discovery Debt

The natural response to the previous finding is to crack down on unauthorized tool use. Understandable. Shadow AI creates real exposure: unauthorized tools accessing company data through personal accounts, outputs that can't be audited, integrations that bypass security controls entirely.

But the organizations treating Shadow AI as purely a security problem are missing its most valuable signal. When an employee reaches for an unapproved tool to solve a work problem, they are showing you exactly where your official tooling falls short. They've already done the use-case discovery. They've already validated that there's a workflow worth automating. You're just not capturing any of it.

This is the Discovery Debt: the gap between where value is being found at the ground level and where decisions are being made at the top.

Scania, the truck manufacturer, offers a useful model. Rather than implementing a blanket ban, they required teams to apply for ChatGPT Enterprise access together, defining their collective use cases as part of the application. What was previously opaque shadow usage became a structured intake of real organizational demand. Security posture improved because the tools were now sanctioned and monitored, and the organization gained a clear map of where AI could actually create value.

What to do about it: Before you block an unauthorized tool, capture why people are using it. A lightweight intake process, even a simple form asking "what problem were you trying to solve," turns a security event into organizational intelligence. Build that mechanism before you need it. The goal isn't to approve every tool. It's to make sure the demand signal reaches the people who set your AI roadmap.

3. The Accountability Debt

This is where the compounding effect becomes critical. Even if you close the Expectations Debt by improving your tooling, and close the Discovery Debt by surfacing real use cases, you will still stall when the use cases require agents that can actually act.

A useful AI agent needs authority to do things: send an email, move a file, approve a transaction, update a record. That authority conflicts with decades of established security boundaries. And so the request gets routed. IT flags the security exposure. Legal flags the liability. Compliance flags the audit gap. Nobody is wrong, exactly. But the default answer to any high-capability AI request becomes "no," not because the risk was evaluated and rejected, but because no one is measured on saying yes.

Mindgard's 2025 survey of cybersecurity professionals found that 39% said "no one" owns AI risk in their organization. That finding makes sense when you look at the incentive structure. The people who control access aren't evaluated on value creation. The people who see the value don't control access. The result is a permanent standoff dressed up as governance.

Gartner predicts that more than 40% of agentic AI projects will be canceled by the end of 2027, citing escalating costs, unclear business value, or "inadequate risk controls." Note the precise phrasing of that last reason. Not that the risks were too high, but that the controls weren't good enough to manage them. Projects that stall this way don't fail on capability. They fail on accountability.

What to do about it: The fix isn't a new policy. It's a new kind of owner. Someone, at the process level, needs to be accountable for both the capability and the exposure. Not a CISO whose incentive is to minimize risk, and not a CDO whose incentive is to maximize adoption. An owner who carries both. This doesn't require a new org chart. It requires an explicit decision about who holds the trade-off and what they're measured on. In our experience, the organizations that make that decision explicitly, even imperfectly, move significantly faster than those that leave it implicit.

Moving Forward

These three debts compound one another. Suppressed expectations make it harder to surface use cases. Missing use-case intelligence makes it harder to justify high-capability agents. And without someone accountable for the capability-risk trade-off, even well-justified requests disappear into approval queues.

OpenClaw is a useful mirror. It went viral precisely because it removed every one of these friction points. No expectations gap: it does what you'd hope. No discovery gap: the use cases are obvious the moment you see it work. No accountability gap: it's open-source, it acts, and you deal with the consequences later.

You can't replicate that inside an enterprise, nor should you. But you can ask whether your current structure makes it easier to block capable AI than to deploy it responsibly. If the answer is yes, the demand doesn't disappear. It goes underground, where you get the risk without the governance, and none of the value.

The goal isn't to lower the bar on security. It's to make sure someone in your organization owns both sides of the trade-off, not just the side that protects them from blame. Reach out if you'd like to discuss your organization's challenges and set a clear direction for creating more value from your data projects.
